How to separate low-risk and high-risk AI tasks

Quick answer: pick one repeatable workflow for separating low-risk and high-risk AI tasks, limit AI to bounded sub-steps, require human approval at each judgment point, and log prompts, sources, edits, and final outputs.

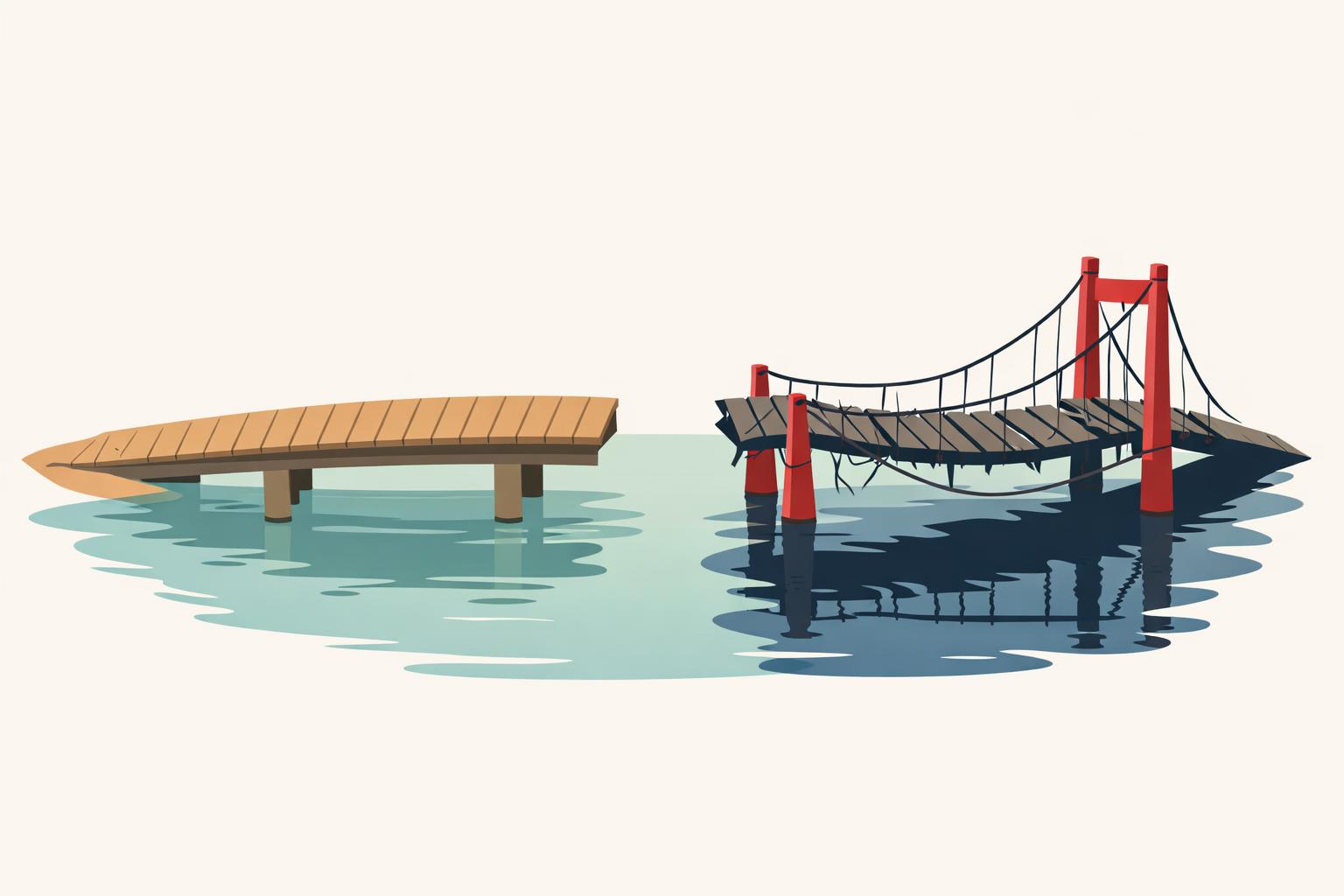

A lot of teams still label AI work as "low risk" because it looks administrative: summarising a contract, screening CVs, drafting a customer reply, rewriting a policy. That is how you end up with a policy deck that says green, yellow, red while the people doing the work still do not trust the workflow.

The practical way to separate low-risk and high-risk AI tasks is to classify each task by consequence, reversibility, evidence quality, and human control. A routine-sounding prompt can still be high risk if a bad output is hard to detect, hard to undo, or likely to affect hiring, legal position, patient safety, pricing, or regulated records.

Separating low-risk and high-risk AI tasks means sorting work by what happens if the model is wrong, not by whether the task sounds simple. A first-pass draft for an internal meeting note is usually low risk because it is easy to review and cheap to fix. An AI-generated shortlist for hiring in Germany or the US is not, even if it saves the recruiter 10 minutes, because bias, documentation gaps, and missed candidates create real downstream cost. Deloitte makes the same point: low-risk tasks can move toward higher autonomy, while higher-risk decisions need calibrated human oversight and intervention points (deloitte.com).

This article gives you a practical way to triage AI tasks across engineering, marketing, HR, finance, legal, and ops, then map those calls to actual controls: review steps, evidence requirements, logging, and escalation paths.

TL;DR

- Map tasks, not tools, and classify each one by consequence, reversibility, evidence quality, and human override before you approve it for AI. This is the same logic the NIST AI Risk Management Framework uses when it asks who is affected, how bad the harm could be, and whether a human can intervene.

- Score every candidate workflow against who is affected, how hard a mistake is to undo, what evidence supports the output, and whether a human can stop the action in time. For example, a draft marketing brief in ChatGPT is very different from an automated change to a payroll record in Workday.

- Review prior AI incidents and your own near-misses before expanding autonomy, using patterns from McKinsey’s AI risk management guidance and the Partnership on AI incident database to set the bar, instead of relying on vendor assurances or one-off demos.

- Require stronger controls for any workflow that touches hiring, legal position, patient safety, pricing, or regulated records, even when the prompt looks routine: a contract clause suggestion in Claude or a candidate ranking in an ATS still needs review.

- Separate low-risk from high-risk work by checking whether the output can be validated against stable ground truth in your real workflow, not the vendor demo. A translation or code formatting task is easier to verify than a recommendation that changes a customer-facing decision.

What actually makes an AI task low risk or high risk?

Risk starts when an output can change a real-world decision. A summary draft for an internal meeting is usually low risk; the same summary attached to a hiring recommendation, claims denial, or treatment escalation is not. Teams get this wrong when they classify by format instead of consequence.

MIT Sloan’s guidance is useful here: the real question is whether the model’s output can be checked against a stable ground truth and validated in the workflow you actually run, not the clean demo workflow the vendor showed you (MIT Sloan Management Review, MIT Sloan risk framework).

- List tasks, not tools. Map where AI is drafting, summarising, ranking, recommending, or deciding. “Copilot in recruiting” is too vague; “GPT-4o produces interview summaries used by hiring managers” is specific enough to assess. This is the same shift the NIST AI Risk Management Framework pushes: evaluate the system in context, not as a generic tool.

- Score each task on four questions. Who is affected? How reversible is a mistake? What evidence supports the output? Can a human override before action? McKinsey recommends reviewing prior AI incidents because similar failure patterns recur under similar conditions, and the Partnership on AI incident database shows how often the same classes of errors repeat.

- Classify by downstream effect. Low-risk tasks inform work. Medium-risk tasks influence decisions but remain reviewable. High-risk tasks materially shape legal, medical, employment, or financial outcomes and should not be fully delegated. For example, an AI draft for a marketing brief is one thing; an AI screen in hiring, like the kind used in some ATS [workflows](/ai-workflows-for-finance-teams-month-end-reporting/), is another.

- Match controls to the tier. Low risk gets standard QA. Medium risk gets reviewer sign-off and logging. High risk gets explicit approval, audit trails, and independent validation. That’s the difference between a content draft in Notion AI and an employment decision path that needs traceability under EU AI Act-style controls.

- Reclassify quarterly. A task can move from low to high risk without the model changing at all; the trigger is usually workflow change. If a team starts using the same summariser to brief leadership or support hiring decisions, the control level has to change with it.

| Tier | What the task does | Minimum control |
|---|---|---|
| Low | informs a person’s work | spot checks, basic QA |
| Medium | influences a decision | human review, logging, override |
| High | can determine an outcome | explicit approval, audit trail, independent validation |
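The four questions and the three tiers above can be sketched as a small classifier. This is a minimal illustration, not a standard scoring method: the `Task` schema, the domain labels, and the thresholds (any sensitive domain or three unfavourable answers means high; one or two means medium) are all assumptions you would tune to your own risk appetite.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Minimum controls per tier, taken from the table above.
MIN_CONTROLS = {
    Tier.LOW: ["spot checks", "basic QA"],
    Tier.MEDIUM: ["human review", "logging", "override"],
    Tier.HIGH: ["explicit approval", "audit trail", "independent validation"],
}

# Domains the article says always need stronger controls (labels are illustrative).
SENSITIVE_DOMAINS = {"hiring", "legal", "patient_safety", "pricing", "regulated_records"}


@dataclass(frozen=True)
class Task:
    """One AI task plus answers to the four scoring questions (hypothetical schema)."""
    name: str
    domain: str
    affects_others: bool   # Who is affected? True if anyone beyond the author.
    reversible: bool       # How reversible is a mistake?
    verifiable: bool       # Can the output be checked against stable ground truth?
    human_override: bool   # Can a human override before action?


def classify(task: Task) -> Tier:
    """Assign a tier. The thresholds here are assumptions, not a fixed rule."""
    unfavourable = sum([
        task.affects_others,
        not task.reversible,
        not task.verifiable,
        not task.human_override,
    ])
    if task.domain in SENSITIVE_DOMAINS or unfavourable >= 3:
        return Tier.HIGH
    if unfavourable >= 1:
        return Tier.MEDIUM
    return Tier.LOW


meeting_note = Task("draft internal meeting note", "internal_ops", False, True, True, True)
cv_ranking = Task("rank CVs for hiring managers", "hiring", True, True, False, True)

for task in (meeting_note, cv_ranking):
    tier = classify(task)
    print(f"{task.name}: {tier.value} -> {', '.join(MIN_CONTROLS[tier])}")
```

The sensitive-domain check runs first on purpose: a routine-looking task in hiring or legal lands in the high tier even when every other answer looks favourable.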

Why do teams misclassify AI risk even when the policy looks good?

Teams misclassify AI risk because policy language is written around categories, while real work breaks on context and incentives.

- They classify the verb, not the workflow position. "Drafting," "summarising," and "ranking" sound harmless until the output crosses a boundary. A draft email becomes a customer commitment; a ranked CV list becomes a hiring filter; a summary becomes the only thing an approver reads. Deloitte’s résumé screener example shows the same failure mode (Deloitte).

- They trust self-reports and policy attestations. Checkboxes tell you whether someone says they reviewed the output, not whether they had time, context, or incentives to challenge it. Accenture’s Responsible AI report makes the same point: principles are easier than operationalising them in day-to-day work.

- They confuse benchmark strength with contextual safety. A model can score well and still be unsafe in the only slice of work that matters. BCG’s 2026 view on AI risk management argues against one-size-fits-all reviews because risk only becomes visible when you test the specific failure modes of the use case.

What should leaders do next if they already have AI in production?

Treat AI risk as an operating problem, not a policy document.

In our work, the fastest way to find the real risk is to build a live inventory with four columns: task, owner, downstream decision, and evidence of review. That exposes the pattern most teams miss: low-risk drafting work gets routed through heavyweight approvals, while recommendation systems in HR, finance, or clinical operations pass through with weak traceability because they "only assist."

| Workflow type | Move fast | Required control |
|---|---|---|
| reversible, internally verifiable work | yes | standard QA and spot checks |
| affects people, money, or compliance | no | human review, traceability, retained records |
| ambiguous or expanding use case | only conditionally | reclassification after observed usage review |

If your inventory comes from surveys alone, do not trust it. Teams routinely underreport shadow workflows and overreport approved ones; interviews, prompt logs, ticket trails, and output samples usually tell the truer story. Then re-check quarterly. Expansion changes risk faster than model version updates do: a marketing assistant becomes a pricing input, a support copilot starts shaping refunds, an internal summary starts feeding a performance review.
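As a rough sketch, the four-column inventory can be held as plain records and checked for the gap described above: tasks that feed a decision but have only self-reported evidence of review. The `InventoryRow` fields mirror the four columns; the evidence labels and the sample rows are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Evidence that comes from observed usage rather than self-report (labels are illustrative).
OBSERVED_SOURCES = {"interview", "prompt_log", "ticket_trail", "output_sample"}


@dataclass
class InventoryRow:
    task: str
    owner: str
    downstream_decision: Optional[str]  # None when the output only informs work
    evidence_of_review: List[str] = field(default_factory=list)


def weak_traceability(rows: List[InventoryRow]) -> List[str]:
    """Return tasks that feed a decision but lack any observed evidence of review."""
    flagged = []
    for row in rows:
        observed = OBSERVED_SOURCES.intersection(row.evidence_of_review)
        if row.downstream_decision and not observed:
            flagged.append(row.task)
    return flagged


# Hypothetical sample inventory.
inventory = [
    InventoryRow("draft campaign brief", "marketing", None, ["survey"]),
    InventoryRow("summarise interviews", "HR", "hiring recommendation", ["survey"]),
    InventoryRow("flag invoice anomalies", "finance", "payment hold", ["prompt_log", "ticket_trail"]),
]

print(weak_traceability(inventory))  # ['summarise interviews']
```

Re-running the same check each quarter against a refreshed inventory is one simple way to catch a task whose downstream decision has changed since the last review.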

Bottom line

Map tasks, not tools, and classify each one by consequence, reversibility, evidence quality, and human override before you let AI touch it. Start with the workflows that look routine but can still create real damage if they’re wrong: hiring, legal, pricing, regulated records. If you can’t tell where your team is already using AI safely versus just on paper, that’s usually a measurement problem that needs outside help.

Your team has AI tools but adoption is shallow? We measure it and fix it. Book a diagnostic call -> calendar.app.Google or email hi@AI-Beavers.com

FAQ

What is a good framework for AI task risk assessment?

Use a simple 4-part gate: consequence, reversibility, evidence quality, and human override. If you want something more operational, add a fifth check for auditability: can you log the prompt, output, reviewer, and final action in a way that survives an internal review or a regulator’s question?
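A minimal sketch of what that auditability check could record, assuming one JSON line per reviewed output. The `AuditRecord` fields follow the four items named above plus a task name and a timestamp; the record shape and the helper names are assumptions, not a prescribed log format.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    task: str
    prompt: str
    output: str
    reviewer: str
    final_action: str
    logged_at: str  # ISO 8601 timestamp in UTC


def new_record(task: str, prompt: str, output: str, reviewer: str, final_action: str) -> AuditRecord:
    """Build a record stamped with the current UTC time."""
    return AuditRecord(
        task, prompt, output, reviewer, final_action,
        logged_at=datetime.now(timezone.utc).isoformat(),
    )


def to_json_line(record: AuditRecord) -> str:
    """Serialise one record as a single JSON line, ready to append to a log file."""
    return json.dumps(asdict(record), sort_keys=True)


record = new_record(
    task="summarise supplier contract",
    prompt="Summarise the termination clauses.",
    output="Either party may terminate with 30 days' notice.",
    reviewer="legal-reviewer-01",  # hypothetical reviewer ID
    final_action="summary approved with edits",
)
print(to_json_line(record))
```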

How do you decide if an AI workflow needs human review?

A workflow needs human review when the model output can trigger an external action, not just produce a draft. A practical rule is to require review whenever the output affects a person, a contract, money movement, regulated data, or a customer commitment.
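That rule is simple enough to write down as a check. The sketch below assumes each workflow is tagged with what its output touches; the tag names and the function are hypothetical.

```python
from typing import Iterable

# What the output may affect, per the rule above (tag names are illustrative).
REVIEW_TRIGGERS = frozenset({
    "person",
    "contract",
    "money_movement",
    "regulated_data",
    "customer_commitment",
})


def needs_human_review(touches: Iterable[str], triggers_external_action: bool) -> bool:
    """True when the output can trigger an external action or touches a review trigger."""
    return triggers_external_action or not REVIEW_TRIGGERS.isdisjoint(touches)


print(needs_human_review({"internal_notes"}, triggers_external_action=False))  # False
print(needs_human_review({"contract"}, triggers_external_action=False))        # True
```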

What are examples of high-risk AI tasks in HR and legal?

In HR, candidate ranking, rejection recommendations, and performance summaries are high-risk because they can shape access to jobs and promotions. In legal, clause extraction, contract redlining, and policy interpretation become higher risk when the output is used without source citation or jurisdiction checks.